Surgeries Were Being Cancelled Midway

The same report kept coming in from ground support: surgeons were abandoning the robot mid-surgery and switching back to manual. I needed to understand why, so I ran three research tracks:

1:1 Interviews

Surgeons, surgical assistants, and circulating nurses across multiple hospitals

Diary Studies

Staff documented friction points and workarounds over four weeks of daily use

OR Observations

Live observation of surgical teams interacting with the GUI during real procedures

I grouped the findings by severity. Three problems showed up in 85% or more of sessions and were the direct cause of the cancellations:

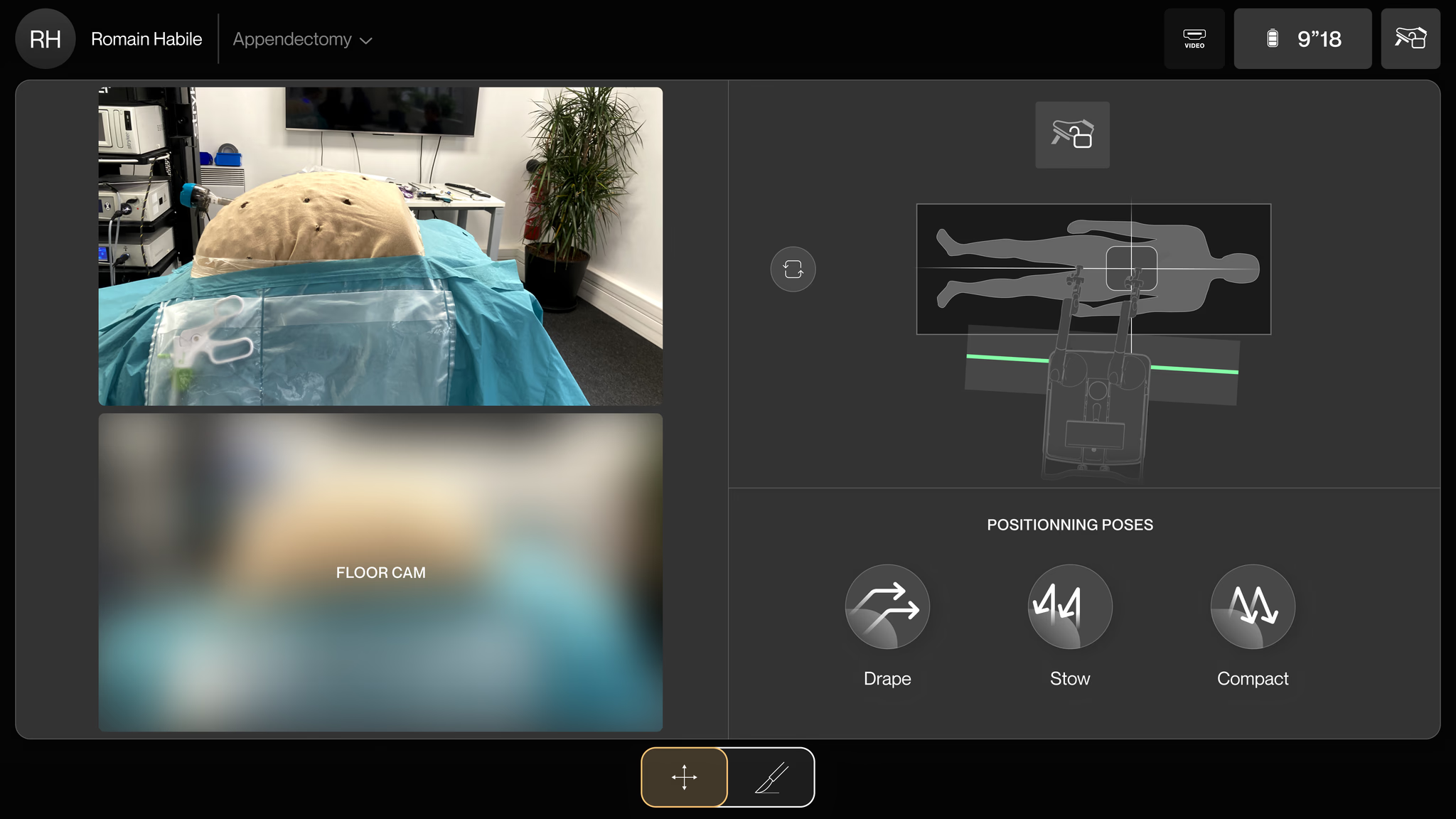

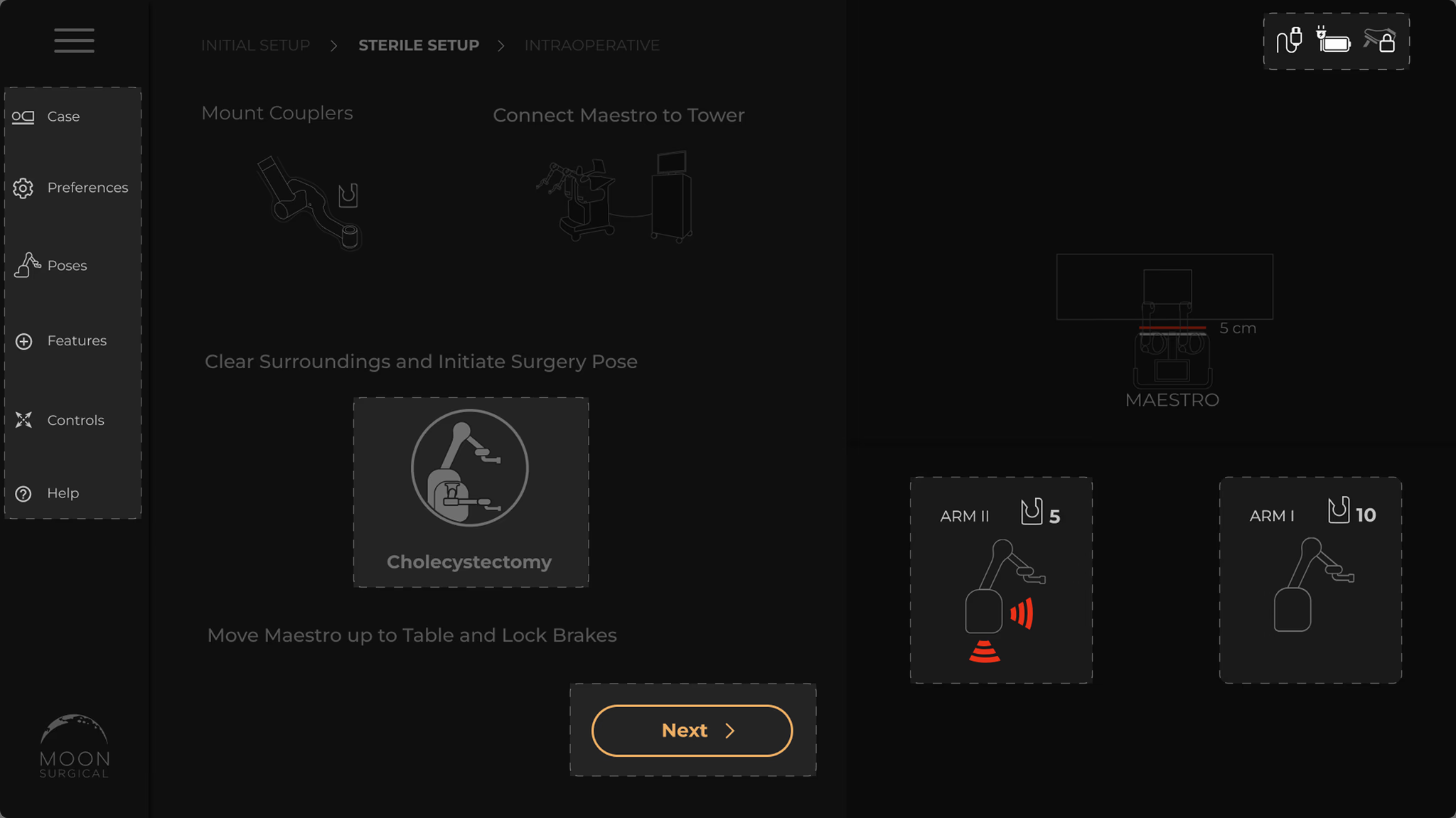

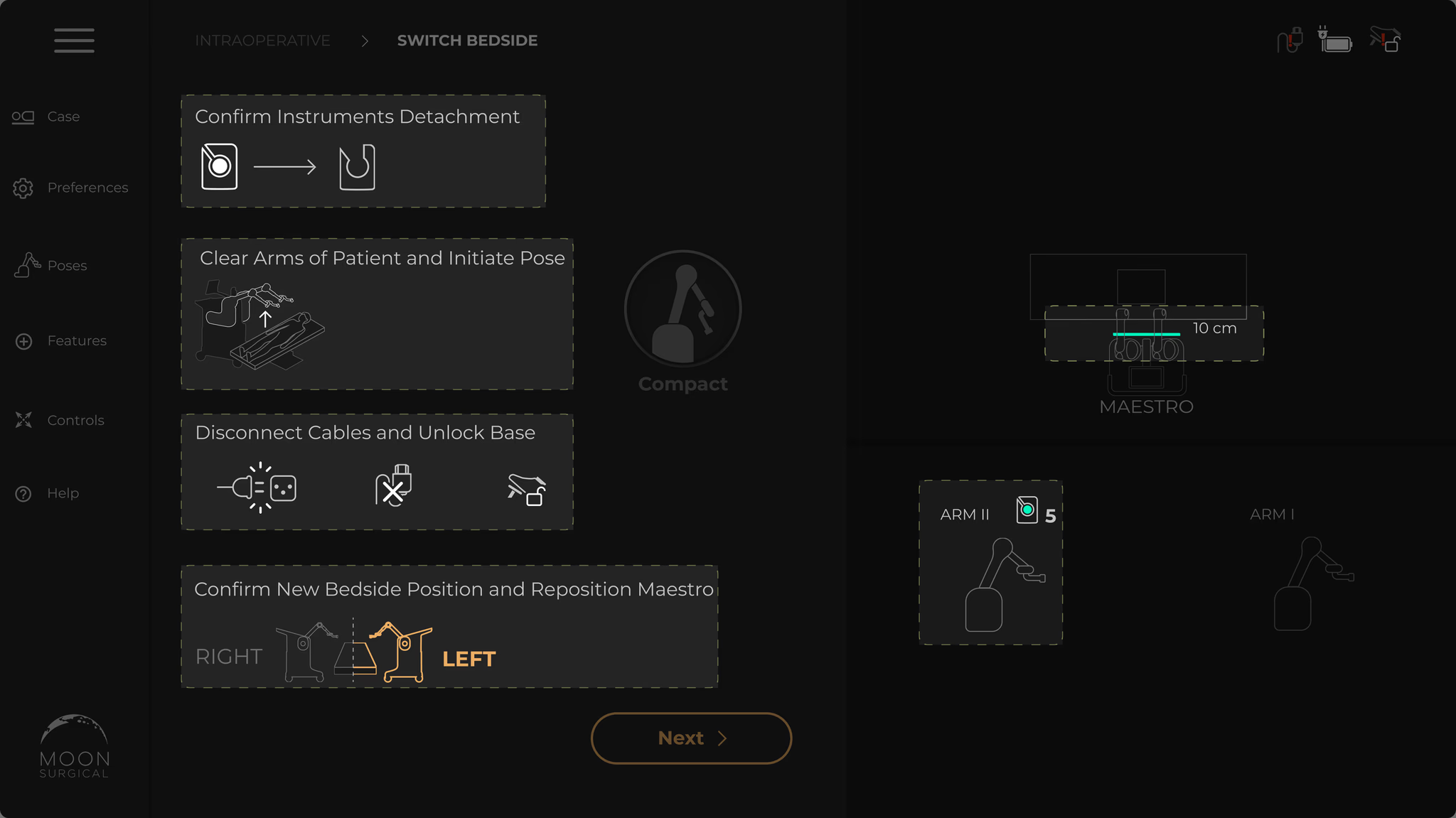

Excessive touch targets in a linear flow

Setup should take three steps. But the screen was packed with buttons, sliders, and toggles that weren’t needed yet. People hesitated. They hit the wrong control. Cognitive fatigue in an OR is dangerous.

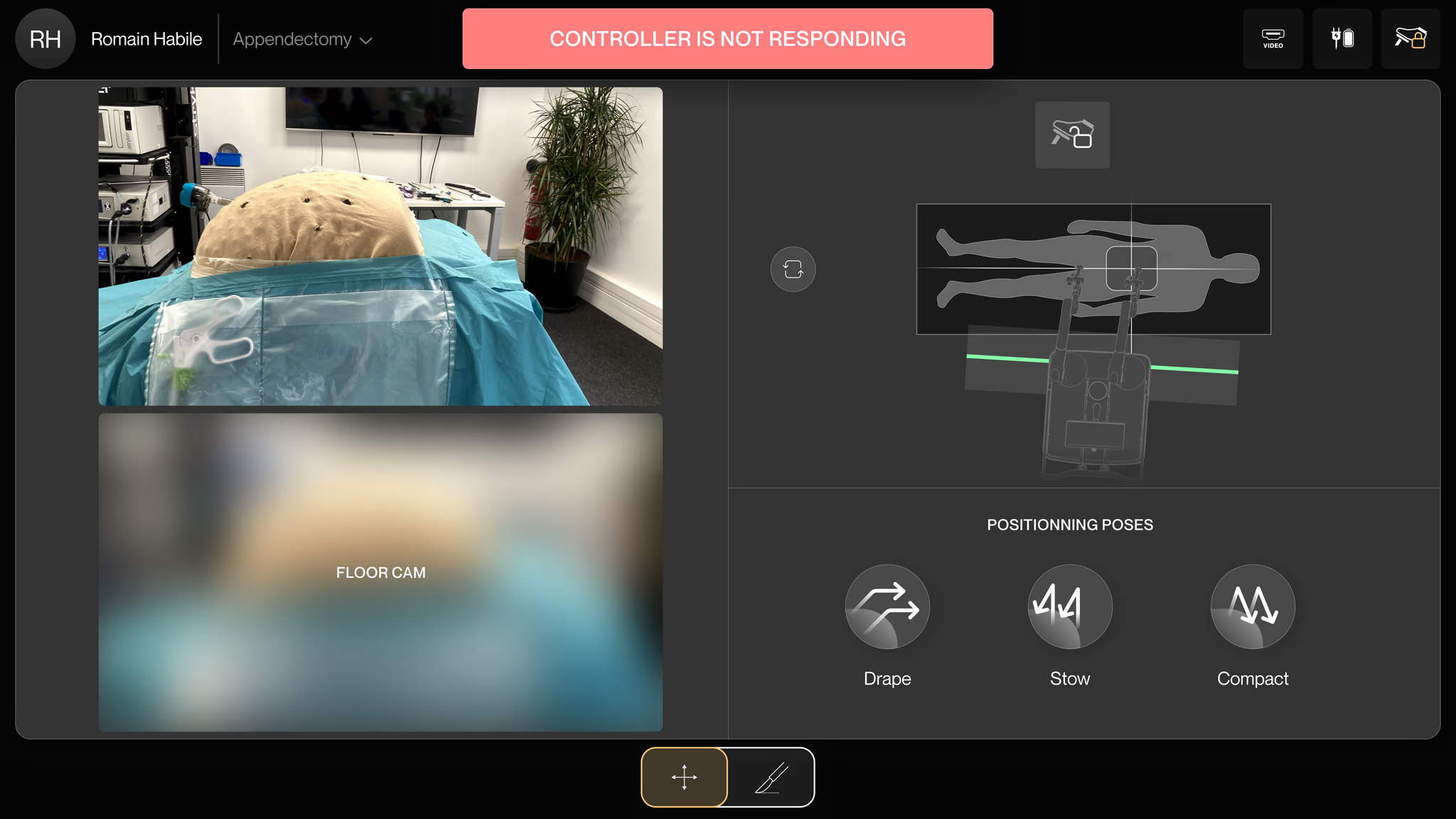

Unclear instructions with no visual hierarchy

The instructions were walls of text. Nobody reads paragraphs while setting up a surgical robot. People didn’t know what to do next, so they backed up and tried again. Setup took twice as long as it should have.

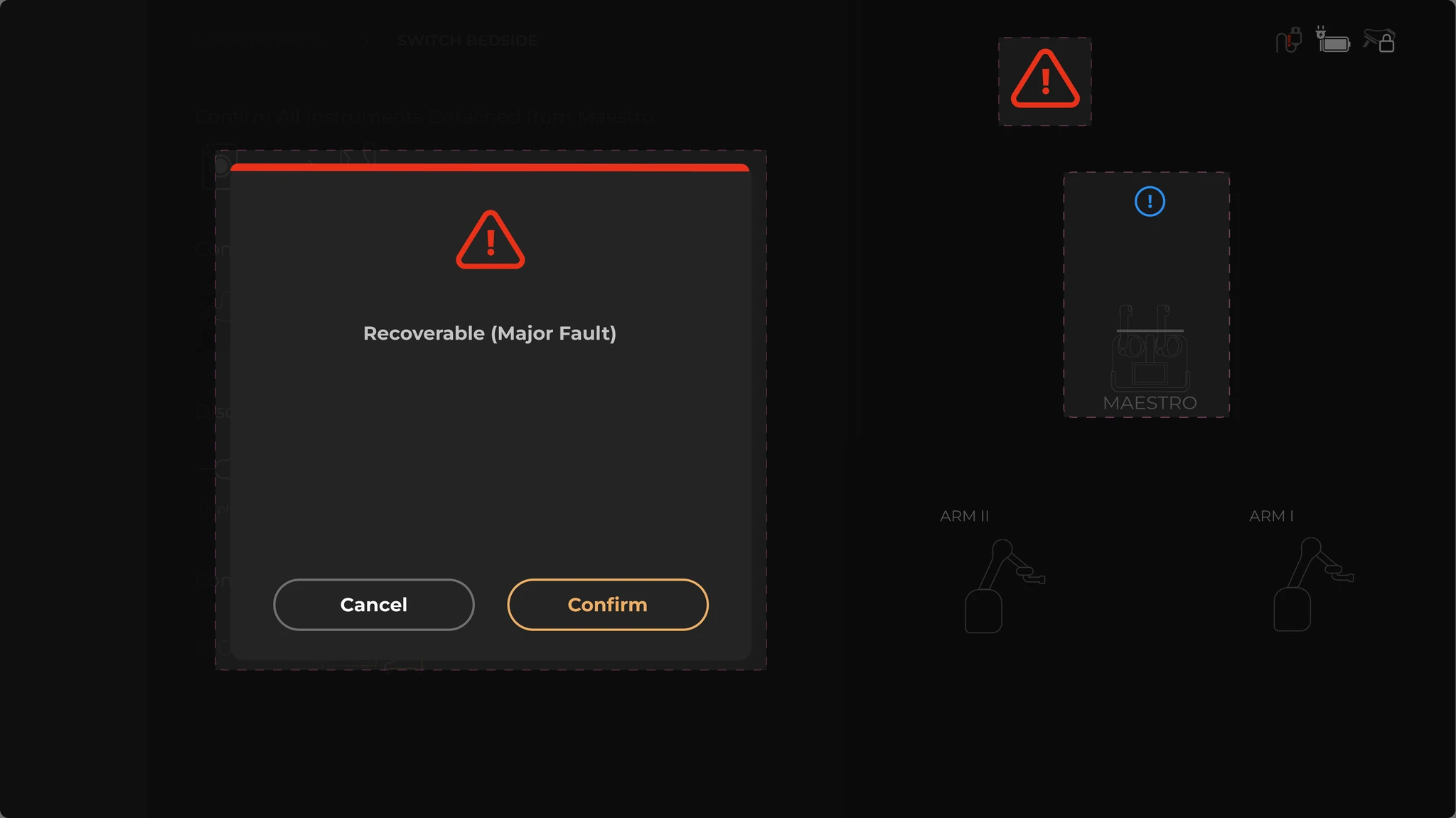

Fault modals with no context or recovery

A fault occurs and a modal takes over the entire screen. No explanation. No recovery path. The only option: restart the whole system. With a patient on the table. That’s how you lose a surgeon’s trust for good.